Why Your Organization Has a Performance Management System but Hasn't Actually Matured

If your performance management system looks more complete on paper than it feels in practice — you’re not alone, and the gap between the two is exactly where improvement stalls.

If you’ve invested in a performance management framework, defined your KPI system, and documented your processes, yet performance conversations remain infrequent, dashboards go unread, and improvement initiatives close without anyone learning anything, the problem is probably not your framework. It is the gap between having a framework and embedding it as a system of behavior and decision-making. This is one of the most consistent patterns observed across maturity assessments in the GCC and beyond.

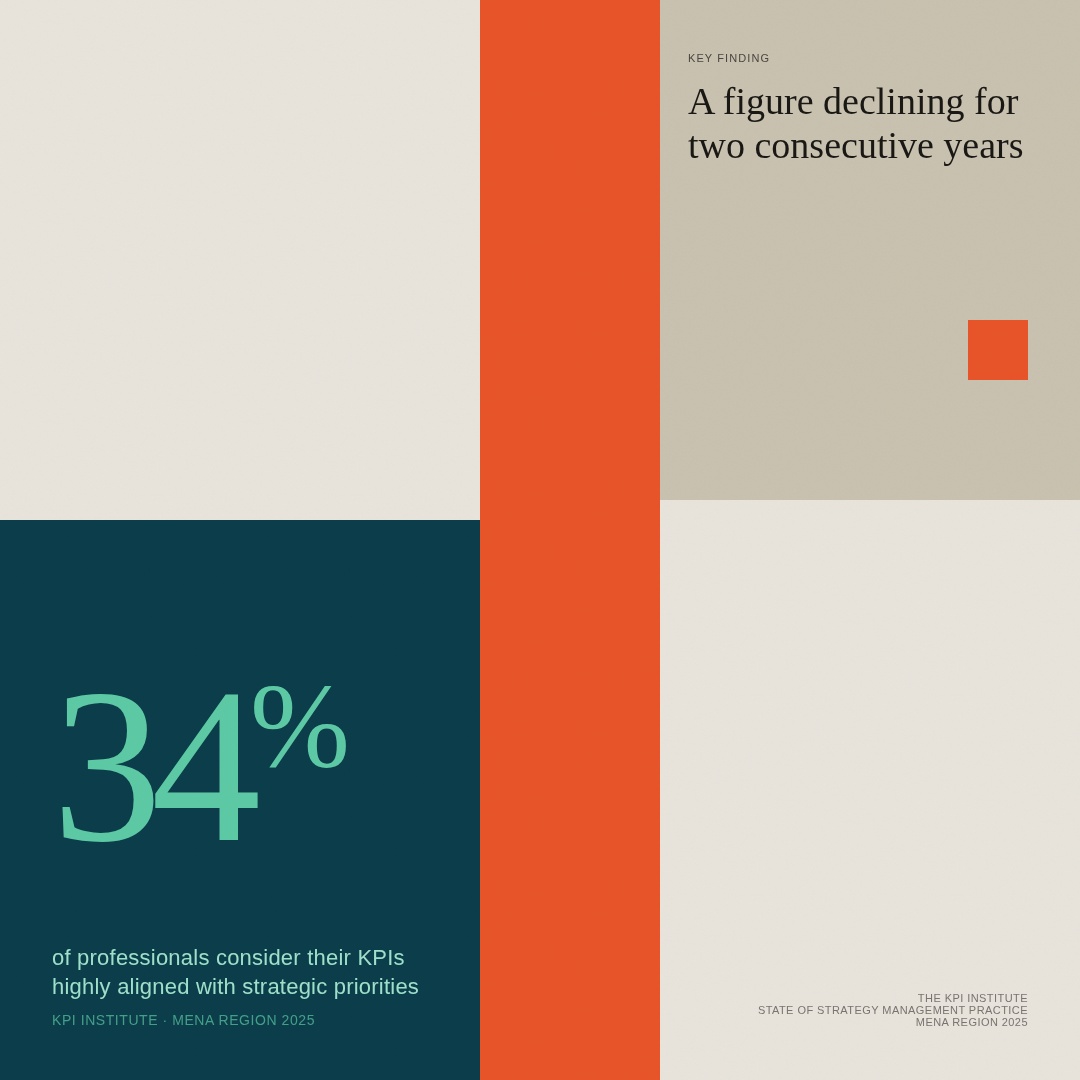

The numbers make this hard to argue with. The KPI Institute's State of Strategy Management Practice: MENA Region 2025 Report, the most comprehensive study of its kind in MENA, found that only 34% of professionals consider their KPIs highly or very highly aligned with strategic priorities, a figure that has been declining for two consecutive years. It also found that 78% of professionals are familiar with cases where strategy execution has failed in their organization, up significantly from the previous year. These are not organizations that lack frameworks, but organizations where the framework hasn't translated into the behavior and integration that maturity actually requires.

Understanding why that gap persists — and what it takes to close it — is what this deep dive is about.

The adoption gap — and why it keeps appearing

The pattern worth naming here is that adoption and embedding tend to get treated as the same step, when in practice they are quite different. A framework gets adopted when an organization maps its capabilities, runs an assessment, and produces a score and a roadmap — but it gets embedded when the practices it describes change how people actually make decisions, have conversations, and behave under pressure, which requires conditions that no framework can create on its own.

What tends to happen instead is that the framework becomes an artifact — something the organization has rather than something it does — and the four gaps below are where that drift usually occurs.

The capability versus usage gap

The first gap, and arguably the most common, is the distance between having a capability and actually using it in decisions, which is not always visible in a maturity score. Organizations can score reasonably well on performance measurement maturity while their dashboards are being reviewed once a quarter by a team with no real authority to act on what they see — the KPIs are defined, documented, and tracked, but they aren't driving anything.

The MENA research puts a specific shape to this. Strategy awareness follows a sharp hierarchical gradient: 64% of executives rate their awareness of organizational strategy as good or very good, but that figure falls to 47% among middle management and drops to just 25% among employees, with 37% of employees describing their awareness as poor or very poor. The framework exists at the top, but it doesn't consistently reach the people responsible for executing it. An organization where only a quarter of employees rate their awareness of the strategy as good or very good cannot be said to have embedded performance management, regardless of what its planning documents contain.

The tooling versus integration gap

The second gap tends to show up in organizations that have invested seriously in technology, and it is worth pausing on because it can be easy to mistake for maturity. Tools get deployed effectively within their own function — dashboards are built, HR systems are configured, planning platforms are set up — but the performance measurement system often doesn't talk to the strategic planning process, and the system tracking individual objectives isn't always connected to the corporate scorecard, which means decisions get made from whichever system the decision-maker happens to trust most.

The MENA research offers a pointed observation here: 47% of professionals rate their data skills as basic or below, and the report notes explicitly that tools are only as effective as the people using them, which is a finding that reframes the tooling question entirely. The issue is not whether dashboards exist, but whether the organizational conditions for using them meaningfully are in place — and those conditions are harder to build than the dashboards themselves. Separately, the research found that the proportion of professionals who agree that projects and processes are aligned with strategic objectives dropped from 61% to 51% in a single year, suggesting that even where tools and frameworks are in place, integration is getting harder rather than easier.

The structural versus behavioral gap

This is perhaps the most important gap, and also the one that frameworks are least equipped to close on their own. Most maturity models assess whether the right structures exist — whether roles are defined, processes are documented, governance is in place — but what they are less naturally good at capturing is whether any of that structure has changed how people actually act, which is ultimately where performance lives.

A well-documented performance review process that managers complete reluctantly, in the minimum time required, to satisfy a compliance cycle is not, in practice, a mature performance management system; it is something closer to a mature filing system. The MENA research reflects this behavioral gap directly: only 39% of executives agree that managing change is a strength within their organization, and that figure drops to 27% among managers, 25% among team leaders, and just 19% among non-managerial staff. The decline is not incidental. It reflects a consistent pattern in which optimism about organizational capability concentrates at the top and dissipates toward the people most responsible for making it real. GPAU's own research adds a complementary finding: 29% of performance professionals identify performance culture as a key barrier to achieving consistent results. The barrier they name is not the absence of frameworks but the culture in which those frameworks operate.

The assessment versus transformation gap

The fourth gap is worth naming plainly, because it tends to go unacknowledged: maturity models are genuinely good at diagnosis and less naturally good at driving the transformation that follows, which is not a flaw so much as an honest limitation of what an assessment tool can do. The MENA data is instructive here: 68% of professionals rate themselves as basic or inexperienced in KPI selection, and only 17% consider their organization highly agile. Even where frameworks are in place, the human capability to operationalize them often is not, and a roadmap is of limited use to an organization that doesn't yet have the skills to walk it.

Organizations can leave a well-conducted assessment with a detailed roadmap and still find themselves reassessing at roughly the same level two years later, because knowing where you are is not the same as knowing what conditions are required to move, and that second question tends to get less attention than the score itself.

What embedding actually requires

For any capability to be sustainable — not just present at a moment of assessment, but durable across personnel changes and shifting priorities — certain enabling conditions need to exist alongside the practice itself, and this is what tends to get underweighted in improvement planning.

In GPAU's maturity framework, each of the five capabilities includes a dedicated Enablers dimension that assesses exactly these conditions: whether governance exists around the capability, meaning clear roles and responsibilities for who owns and maintains it; whether the function is supported by appropriate technology; whether practices are formalized in a way that doesn't depend on particular individuals; whether communication is structured; and whether the organization is actively building skills in that area — which together form a picture of whether the capability is institutional or just personal.

The reason this matters is that an organization can demonstrate a capable practice and still score poorly on Enablers, and that combination is a specific signal that the capability is present but fragile. A strategy team in a GCC government authority might run a sophisticated planning process — but if that process depends almost entirely on one high-performing unit and the enabling conditions around it are weak, a reorganization or leadership transition can quietly undo years of apparent progress, which a practice score alone wouldn't have predicted.

Enablers doesn't resolve the behavioral gap — no framework assessment fully can, because behavior requires culture and leadership, which can be assessed but not installed — but what it does is make the diagnosis more specific and more honest. An organization that scores well on practice but poorly on Enablers knows something actionable: the capability exists, but it hasn't been institutionalized yet, which is a different and more useful improvement conversation than a general recommendation to increase maturity.
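The practice-versus-Enablers reading described above can be sketched as a small decision rule. This is only an illustration under stated assumptions: the 1-to-5 scale, the cut-off of 3.0, the `CapabilityScore` type, the `diagnose` function, and every label other than "present but fragile" are hypothetical and are not taken from GPAU's framework.

```python
from dataclasses import dataclass

# Hypothetical cut-off on an assumed 1-5 scale (not defined by GPAU's framework).
STRONG_THRESHOLD = 3.0


@dataclass
class CapabilityScore:
    name: str
    practice: float  # assumed 1-5 score for the practice itself
    enablers: float  # assumed 1-5 score for the Enablers dimension


def diagnose(score: CapabilityScore, threshold: float = STRONG_THRESHOLD) -> str:
    """Return an illustrative reading of a practice/Enablers score pair."""
    practice_ok = score.practice >= threshold
    enablers_ok = score.enablers >= threshold
    if practice_ok and enablers_ok:
        return "institutionalized"
    if practice_ok:
        # Capable practice, weak enabling conditions: the case the article highlights.
        return "present but fragile"
    if enablers_ok:
        return "conditions in place, practice lagging"
    return "not yet established"


# Example: a sophisticated planning process that depends on one strong unit.
planning = CapabilityScore("Strategic planning", practice=4.0, enablers=2.0)
print(planning.name, "->", diagnose(planning))
```

The point of keeping the two scores separate, rather than averaging them, is that an average of 3.0 would hide exactly the combination that signals fragility.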

Self-assessment: where does your organization actually stand?

Use this checklist to test whether your framework is embedded or just present. For each statement, mark whether it is consistently true, partially true, or not yet true in your organization.

Capability vs. usage

Tooling vs. integration

Structure vs. behavior

Assessment vs. transformation

If most of your answers are “partially true” or “not yet” — particularly in the behavioral and Enablers categories — that’s not a failure of the framework. It’s a signal that the enabling conditions haven’t been built yet. That’s the conversation a structured assessment can help you start. To see where your organization stands, visit gpaunit.org.
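For readers who record their checklist answers in a spreadsheet or script, the "most of your answers" reading can be tallied as below. This is a minimal sketch: the function name `needs_enabling_work` is hypothetical, and interpreting "most" as "more than half" is an assumption rather than a rule stated by the checklist.

```python
from collections import Counter

# The three answer options offered by the checklist.
ANSWERS = {"consistently true", "partially true", "not yet true"}


def needs_enabling_work(answers: list[str]) -> bool:
    """True when more than half of the answers are 'partially true' or 'not yet true'.

    Assumption: 'most' is read as a strict majority.
    """
    if not answers:
        raise ValueError("at least one answer is required")
    unknown = set(answers) - ANSWERS
    if unknown:
        raise ValueError(f"unrecognized answers: {sorted(unknown)}")
    counts = Counter(answers)
    not_embedded = counts["partially true"] + counts["not yet true"]
    return not_embedded > len(answers) / 2


responses = ["consistently true", "partially true", "not yet true", "partially true"]
print(needs_enabling_work(responses))
```

Tallying per category (for example, the behavioral statements separately from the tooling ones) would follow the same pattern with one list of answers per category.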

Frequently asked questions

What is the difference between performance management maturity and performance management adoption? Adoption means a framework has been implemented and capabilities have been assessed — but maturity means those capabilities are embedded in how decisions are made and how people behave, and the two can look similar from the outside while being quite far apart in practice.

Why do maturity scores sometimes stay flat across reassessments? Most commonly because the improvement effort focused on the practice (the process, the documentation, the tool) without addressing the enabling conditions that make that practice sustainable. Governance, skills, communication, and technology integration need to develop alongside the practice itself rather than after it.

What does the Enablers dimension assess in GPAU's framework? For each of the five capabilities, Enablers assesses whether governance structures are in place, whether appropriate technology supports the function, whether practices are formalized and documented, whether communication is structured, and whether skills are being actively developed — and a low Enablers score alongside a higher practice score is a signal that the capability is fragile rather than institutionalized.

How long does it typically take to move from one maturity level to the next? It depends on the capability and the starting level, but organizations that address both practice and enabling conditions tend to move more durably than those that focus on one without the other — the transition from Level 2 to Level 3 is typically more about structure and consistency, while from Level 3 to Level 4 the enabling conditions tend to become the primary lever.

Where should an organization start if it wants to close the adoption gap? With an honest assessment of whether the capabilities it believes it has are actually being used in decisions — and whether the enabling conditions for those capabilities are institutional or personal, because the gap between those two questions is usually where the real work is.